How Word Beam Improves HTR Accuracy

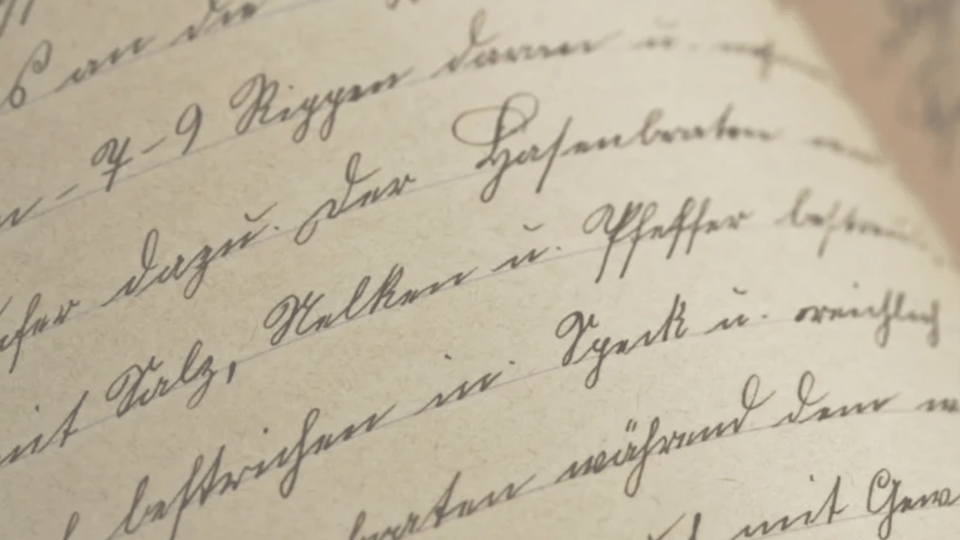

Handwritten Text Recognition (HTR) has evolved rapidly over the last decade. With the rise of deep learning, we have moved from simple segment-based recognition to sophisticated end-to-end neural networks. However, even the most advanced models still struggle with the inherent ambiguity of human handwriting. Slanted letters, varying stroke widths, and cursive connections often lead to visual confusion that more compute alone cannot fully resolve.

A neural network might correctly identify the shapes in a word (for example, a sequence that looks like "cl") but still fail to output a readable result, perhaps outputting "d" instead. This gap between visual recognition and linguistic sense is where Word Beam (WB) becomes an essential part of the tech stack. In this post, we’ll explain how Word Beam works, why it outperforms standard decoding on dictionary-covered text, and how it reduces error rates in professional OCR and HTR applications.

The Problem with CTC Decoding

To understand why Word Beam is necessary, we must look at how modern HTR systems are trained. Most models use Connectionist Temporal Classification (CTC). CTC is an output layer and loss function that lets the model predict character sequences without labeling the exact horizontal position of each character in the training image.

While CTC is brilliant for training, it presents a challenge during inference, when the model is actually reading new text. To turn the network's raw per-slice probabilities into actual text, many systems use Best Path Decoding (also known as Greedy Decoding).

Where Best Path Decoding Fails

Best Path Decoding is the simplest approach. It picks the single most likely character for every vertical slice of an image, then collapses repeated characters and drops the CTC blank label. If the AI is 51% sure a character is a "0" and 49% sure it is an "o," it picks the "0."
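As a minimal sketch of the idea, the function below decodes a small, made-up probability matrix greedily. The names `best_path_decode`, `BLANK`, and the toy `alphabet` are illustrative assumptions, not part of any particular library.

```python
BLANK = "-"  # hypothetical symbol standing in for the CTC blank label

def best_path_decode(probs, alphabet):
    """Greedy CTC decoding.

    probs: one row per time step (image slice); row[i] is the
           probability of alphabet[i].
    alphabet: the labels, including BLANK, in the same order as each row.
    """
    # Step 1: pick the most likely label at every time step.
    best = [alphabet[max(range(len(row)), key=row.__getitem__)] for row in probs]
    # Step 2: collapse consecutive repeats. Step 3: drop blanks.
    out = []
    prev = None
    for ch in best:
        if ch != prev and ch != BLANK:
            out.append(ch)
        prev = ch
    return "".join(out)

# Toy example: the writer meant "no", but "0" narrowly beats "o".
alphabet = ["-", "n", "o", "0"]
probs = [
    [0.10, 0.90, 0.00, 0.00],  # "n"
    [0.00, 0.00, 0.49, 0.51],  # "0" wins 51% to 49%
    [0.00, 0.00, 0.49, 0.51],  # same label again, collapsed
    [1.00, 0.00, 0.00, 0.00],  # blank
]
print(best_path_decode(probs, alphabet))  # n0
```

Nothing in this procedure asks whether "n0" is a word, which is the weakness the rest of this section describes.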

This method lacks "common sense." It doesn't check if the resulting characters form a real word. This is why raw OCR output is often full of "word-like" nonsense, such as:

- "h3llo" instead of "hello"

- "rnodern" instead of "modern"

- "clata" instead of "data"

Because Best Path Decoding evaluates each character in a vacuum, a single pixel of noise can derail an entire word, leading to high Word Error Rates (WER) that make the data useless for search or automation.

What is Word Beam?

Word Beam is a decoding algorithm designed to sit between the neural network and the final output. Its primary job is to restrict the AI’s output to a specific lexicon while maintaining the flexibility to recognize non-dictionary items like numbers.

For developers using translation memory tools or a CAT translation tool, Word Beam acts as a quality gate. Unlike the greedy approach, it explores multiple candidate paths simultaneously; this set of candidates is the "beam."
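To make the "beam" idea concrete, here is a sketch of plain CTC beam search with no lexicon at all. It is not Word Beam itself, only the unconstrained search that a lexicon constraint would be layered on top of. The function name `ctc_beam_search` and its parameters are assumptions chosen for illustration.

```python
from collections import defaultdict

def ctc_beam_search(probs, alphabet, blank=0, beam_width=3):
    """Plain CTC beam search without a lexicon (illustrative only).

    probs: one row per time step; row[i] is the probability of alphabet[i].
    blank: index of the CTC blank label in alphabet.
    """
    # Each beam maps a prefix (tuple of label indices) to two probabilities:
    # [paths ending in blank, paths ending in a non-blank label].
    beams = {(): [1.0, 0.0]}
    for row in probs:
        candidates = defaultdict(lambda: [0.0, 0.0])
        for prefix, (p_blank, p_non_blank) in beams.items():
            total = p_blank + p_non_blank
            for c, p in enumerate(row):
                if c == blank:
                    # A blank never changes the text.
                    candidates[prefix][0] += total * p
                elif prefix and prefix[-1] == c:
                    # Repeated label: merges into the same text...
                    candidates[prefix][1] += p_non_blank * p
                    # ...unless a blank separated the two occurrences.
                    candidates[prefix + (c,)][1] += p_blank * p
                else:
                    candidates[prefix + (c,)][1] += total * p
        # Keep only the beam_width most probable texts.
        ranked = sorted(candidates.items(), key=lambda kv: sum(kv[1]), reverse=True)
        beams = dict(ranked[:beam_width])
    best = max(beams, key=lambda k: sum(beams[k]))
    return "".join(alphabet[i] for i in best)

# The blank wins every slice, so greedy decoding would return "".
# The paths "a-", "-a", and "aa" all spell "a" and together carry 0.64.
alphabet = ["-", "a"]
probs = [[0.6, 0.4], [0.6, 0.4]]
print(ctc_beam_search(probs, alphabet))  # a
```

Because several paths can spell the same text, summing them can overturn the greedy choice even before any dictionary is involved.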

The Three Core Components of WB

- Dictionary Validation - As the beam expands, WB constantly checks the evolving string against a list of known words. If the AI starts a word with "Xy...", and no words in your dictionary start with "Xy", that path is penalized or discarded in favor of a more linguistically probable one.

- Prefix Trees (Tries) - Searching a dictionary of 100,000 words for every single character would be incredibly slow. WB solves this using a "Trie" structure. This tree-like data structure lets the algorithm walk down one node per character of the current prefix and read off which characters are "legal" next steps, regardless of how large the dictionary is.

- Language Model Smoothing - WB isn't just a rigid filter. It uses smoothing techniques to balance what the AI "sees" (visual probability) with what the dictionary says is "legal." If the visual evidence for a non-dictionary word is overwhelming, as with a unique proper noun, a well-configured WB can still allow it to pass.
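The three components above can be sketched in a few lines. This is a simplified illustration, not the code of any Word Beam implementation: `TrieNode`, `Trie`, `next_chars`, `contains`, `smoothed_score`, and the `oov_weight` parameter are all hypothetical names, and the multiplicative penalty is just one simple way to express "penalized rather than discarded."

```python
class TrieNode:
    def __init__(self):
        self.children = {}    # char -> TrieNode
        self.is_word = False  # True if a lexicon word ends here

class Trie:
    def __init__(self, words=()):
        self.root = TrieNode()
        for word in words:
            self.add(word)

    def add(self, word):
        node = self.root
        for ch in word:
            node = node.children.setdefault(ch, TrieNode())
        node.is_word = True

    def _find(self, prefix):
        # Walk one node per character; None means no word has this prefix.
        node = self.root
        for ch in prefix:
            node = node.children.get(ch)
            if node is None:
                return None
        return node

    def next_chars(self, prefix):
        """Characters that can legally follow prefix (dictionary validation)."""
        node = self._find(prefix)
        return sorted(node.children) if node else []

    def contains(self, word):
        node = self._find(word)
        return node is not None and node.is_word

def smoothed_score(visual_prob, in_lexicon, oov_weight=0.01):
    """Hypothetical smoothing: down-weight, but do not forbid, non-lexicon text."""
    return visual_prob * (1.0 if in_lexicon else oov_weight)

trie = Trie(["data", "date", "modern"])
print(trie.next_chars("dat"))  # ['a', 'e']
print(trie.next_chars("xy"))   # []
```

With a small `oov_weight`, a visually plausible non-word loses to a weaker dictionary word; raising the weight lets overwhelming visual evidence win.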

Key Benefits for Developers

Integrating Word Beam into your HTR or OCR pipeline offers several tangible technical and business advantages.

1. Lower Word Error Rate (WER)

The most immediate impact of WB is the jump in accuracy. In many HTR benchmarks, switching from Best Path Decoding to Word Beam can reduce the Word Error Rate significantly, with the size of the gain depending on how well the dictionary covers the text being read. By preventing the AI from "inventing" new, misspelled words, the output becomes instantly more searchable and readable for end-users.
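For readers who want to measure this on their own data, WER is the word-level edit distance between the reference and the recognized text, divided by the number of reference words. The helper below is a minimal sketch; the name `word_error_rate` and the whitespace tokenization are assumptions.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + insertions + deletions) / reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # Levenshtein distance over words, computed one row at a time.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        cur = [i]
        for j, h in enumerate(hyp, 1):
            cur.append(min(
                prev[j] + 1,             # deletion
                cur[j - 1] + 1,          # insertion
                prev[j - 1] + (r != h),  # substitution, or match at no cost
            ))
        prev = cur
    return prev[-1] / len(ref)

print(word_error_rate("the modern data set", "the rnodern clata set"))  # 0.5
```

Two of the four words are wrong, so the WER is 0.5 even though most individual characters were read correctly.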

2. Handling Out-of-Vocabulary (OOV) Tokens

A common criticism of dictionary-based decoders is that they cannot handle dates, phone numbers, or currency. Word Beam is designed with "modes" to solve this. You can configure it to use a dictionary for standard words but switch to a "free-form" mode for numbers and punctuation. This hybrid approach ensures you get the benefits of a dictionary without losing the ability to capture specific data points.
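One way to picture this hybrid behavior is a function that decides which characters may come next, depending on whether the decoder is currently inside a word. This is a simplified sketch under stated assumptions: `allowed_next_chars`, `WORD_CHARS`, and `NON_WORD_CHARS` are hypothetical names, the character sets are arbitrary, and a real decoder would use a trie instead of scanning the lexicon.

```python
import string

WORD_CHARS = set(string.ascii_lowercase)          # constrained by the lexicon
NON_WORD_CHARS = set(string.digits + " .,/-$")    # decoded freely

def allowed_next_chars(text, lexicon):
    """Return the set of characters allowed to follow text."""
    # The current partial word is the trailing run of word characters.
    i = len(text)
    while i > 0 and text[i - 1] in WORD_CHARS:
        i -= 1
    prefix = text[i:]
    if not prefix:
        # Not inside a word: any non-word character, or the start of a word.
        return NON_WORD_CHARS | {w[0] for w in lexicon}
    # Inside a word: only characters that keep it on track for a lexicon word.
    allowed = {w[len(prefix)] for w in lexicon
               if w.startswith(prefix) and len(w) > len(prefix)}
    if prefix in lexicon:
        # A complete word may be followed by any non-word character.
        allowed |= NON_WORD_CHARS
    return allowed

lexicon = {"paid", "pay"}
print(sorted(allowed_next_chars("pa", lexicon)))      # ['i', 'y']
print("5" in allowed_next_chars("paid $4", lexicon))  # True
```

Words stay dictionary-constrained, while an amount like "$45" can be spelled out digit by digit without ever appearing in the lexicon.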

3. Real-Time Efficiency

Checking a dictionary constantly might seem slow, but because Word Beam uses optimized C++ implementations and Trie-based lookups, it is remarkably efficient. It performs these checks during the decoding phase, meaning you don't need a separate, slow post-processing step to fix typos after the fact. This makes it suitable for real-time applications, such as mobile scanning apps.

4. Domain-Specific Customization

WB allows developers to swap lexicons based on the use case. If you are building an HTR tool for medical professionals, you can load a medical dictionary. If you are processing legal documents, you can load a legal lexicon. This "pluggable" nature makes the HTR engine significantly more versatile than a generic OCR tool.
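In practice, a domain lexicon is often just the set of distinct words found in representative text from that domain. The helper below is a hypothetical sketch (`build_lexicon` is not a function from any specific library) showing how such a word list might be derived before being handed to the decoder.

```python
import re

def build_lexicon(corpus_text):
    """Collect the distinct lowercase words that appear in a domain corpus."""
    return set(re.findall(r"[a-z]+", corpus_text.lower()))

medical = build_lexicon("Patient shows acute Bronchitis; bronchitis treated.")
print(sorted(medical))  # ['acute', 'bronchitis', 'patient', 'shows', 'treated']
```

Swapping domains then means building a different set from a different corpus; the decoding logic itself does not change.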

Comparison: Decoding Methods at a Glance

| Feature | Best Path (Greedy) | Vanilla Beam Search | Word Beam |
| --- | --- | --- | --- |
| Logic | Highest probability per slice | Top N paths (character-based) | Top N paths (lexicon-constrained) |
| Dictionary | No | No | Yes |
| Accuracy | Low | Moderate | High |
| Best For | Fast prototyping | CAPTCHAs | Full sentences / Documents |

Key Takeaways for HTR Accuracy

In the competitive landscape of document digitization, accuracy is the only metric that truly matters. If a human has to manually correct every third word an AI transcribes, the cost savings of automation vanish. Standard CTC decoding is a great start, but it isn't enough for professional-grade HTR. Word Beam provides the necessary linguistic guardrails to turn raw, ambiguous neural network output into precise, usable text. By combining the visual power of deep learning with the structural reliability of a lexicon, WB ensures that your HTR system doesn't just see; it understands.